NSF Customer Experience Research

National Science Foundation — Enterprise CX Assessment

The Problem

In December 2021, Executive Order 14058 directed federal agencies to transform customer experience and rebuild public trust. The EO framed every interaction between the government and the public as an opportunity to reduce "time taxes": the cumulative burden of navigating fragmented, compliance-oriented processes.

NSF's Division of Administrative Services (DAS), responsible for facilities, security, IT, records, and building operations, needed to understand how NSF staff experienced its 200+ internal services before it could improve them. No one had a complete picture. Services were requested through a patchwork of email aliases, SharePoint forms, walk-up desks, and personal relationships. There were no consistent feedback mechanisms and no unified service catalog, and 50% of the workforce was eligible for retirement within three years.

The Approach

I joined a 5-person CX research team at Informed XP tasked with assessing DAS's entire service operation. The work began with baseline stakeholder interviews with branch leadership to map each branch's services, customers, pain points, and accountability structures. These interviews surfaced a recurring tension: DAS staff saw themselves as mission-critical partners, but the rest of NSF often experienced them as compliance obstacles.

I helped build the research repository that became the project's analytical backbone: a structured inventory of services mapped, internal systems cataloged, existing materials reviewed, and external sources benchmarked against DAS operations.

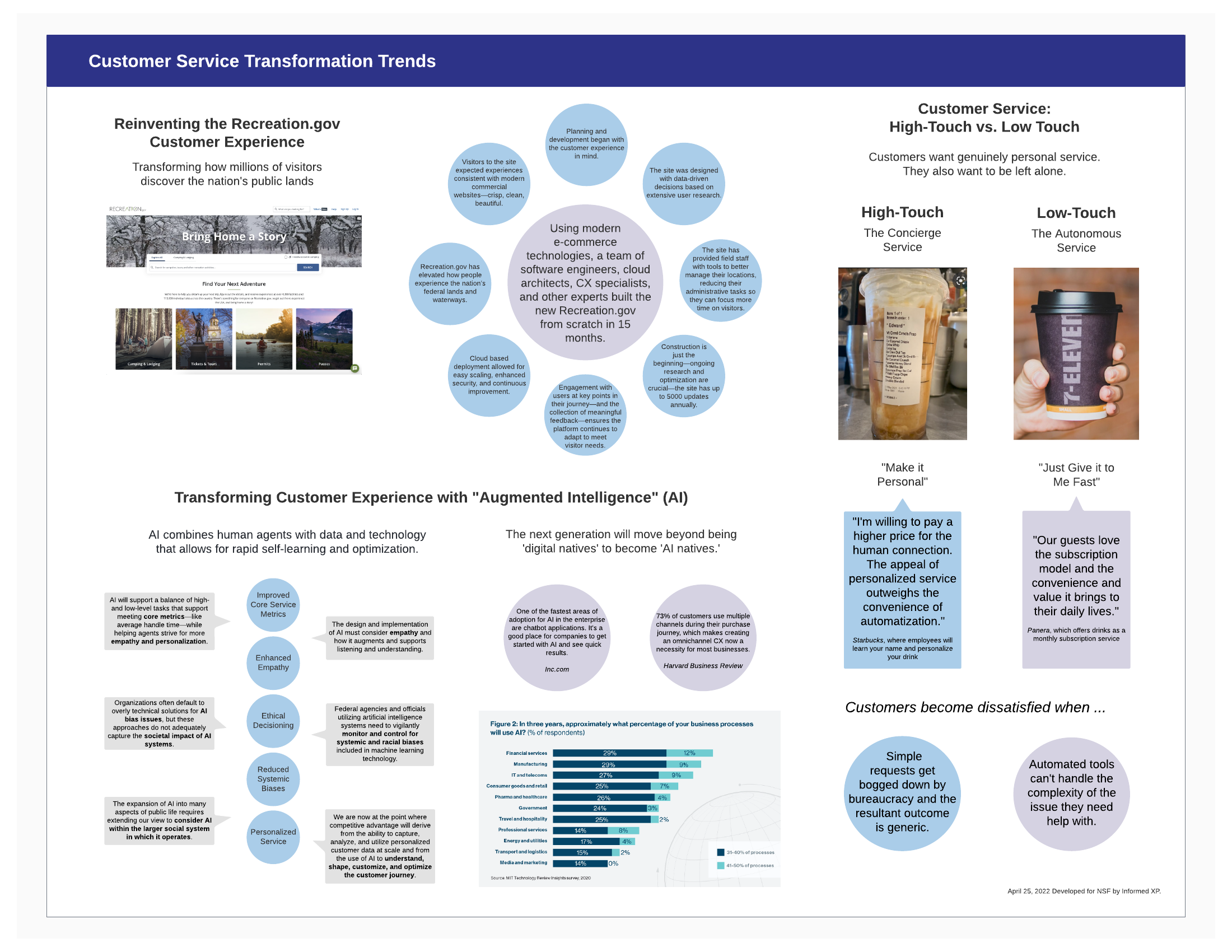

I also led external research and trends reports that synthesized sources and federal case studies into strategic briefs for NSF leadership. These reports served a dual purpose. First, they gave DAS leadership concrete models to reference: how other agencies were renaming "administrative services" as "mission support," how the CIA had experimented with internal marketplace competition for admin services, and how the service-profit chain connected employee satisfaction to customer outcomes. Second, they grounded our recommendations in established precedent rather than abstract theory.

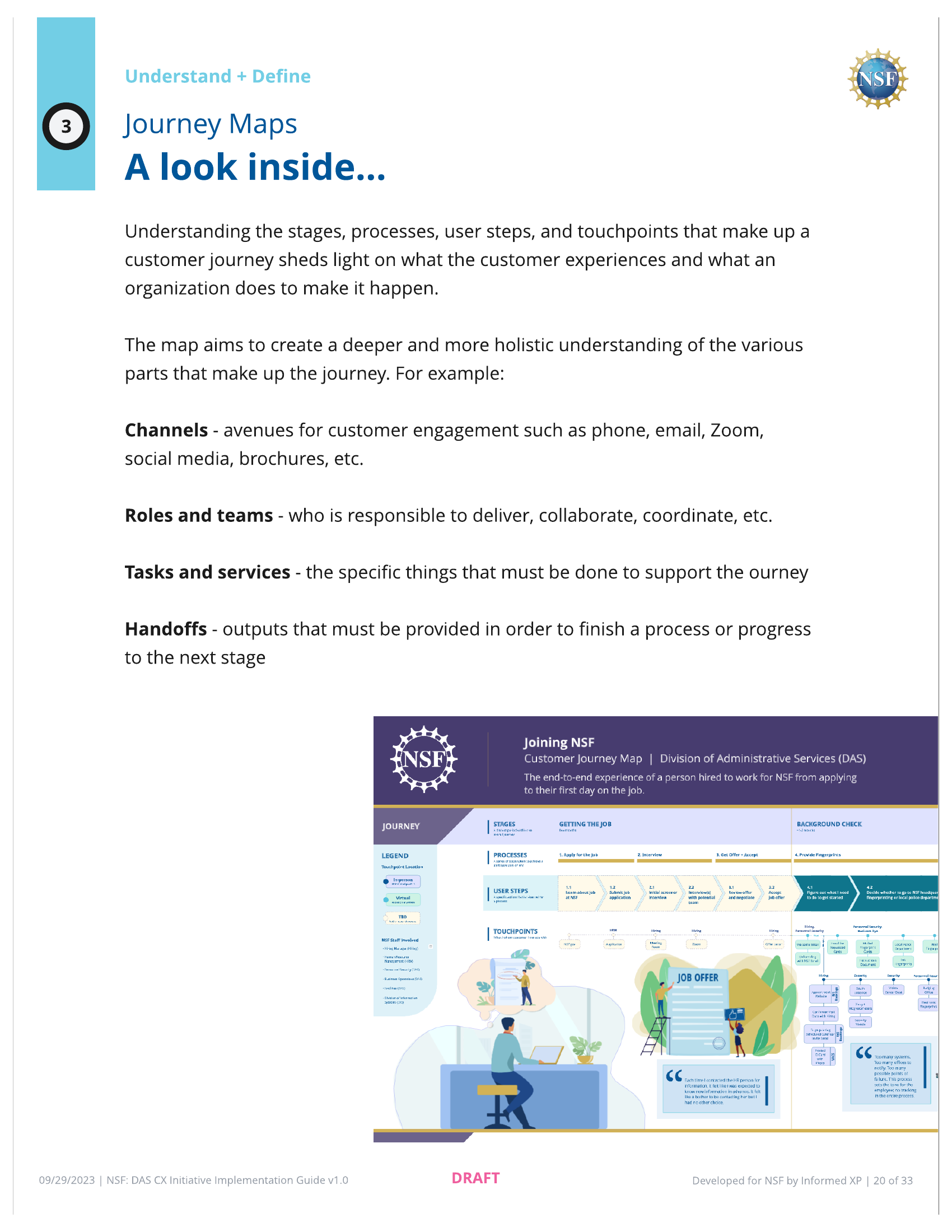

Survey data, combined with interview transcripts and workshop outputs, fed into a 6-step CX framework the team developed: User Typology, Service Model, Journey Maps, CX Criteria, CX Scorecard, and Implementation Roadmaps.

Over four task orders, the work progressed from discovery research through framework development to operational scorecards. The 5 CX criteria we defined became the measurement structure used to evaluate individual services quarterly, tracking metrics, customer sentiment, and dependencies across DAS branches. I departed during the fourth task order in April 2024.

Reflection

This project taught me how organizational research differs from product research. In product work, you're trying to understand how someone uses a tool. In organizational CX, you're trying to understand how a system sees itself — and the gap between that self-image and how it's experienced. Across interviews, we kept finding teams that genuinely cared about service but had no way to know whether that effort was landing. That gap between effort and perceived value was the real finding, and no amount of service cataloging would have surfaced it without the interview work underneath.